The Wow Factor: Science, Technology & the Movies

If you’ve been reading my media literacy columns, then you might already know that media literacy involves both analyzing and creating media messages.

When we engage students in both, we help them appreciate all that goes into making messages and how they work.

The STEAM (STEM+Art) movement is similarly designed to help students make connections among all of these disciplines (often through hands-on learning) and to challenge them to consider how important these subjects are as they begin to explore their career choices.

Although this column about the science and technology behind the movies is not specifically about science fiction films, they are popular with some teachers who want to help their students better understand what is authentic (and what is not) in this genre. Since media literacy also involves “critical thinking,” studying and questioning what we see in sci-fi movies has merit.

Mark Ruffalo on The Avengers set in his Hulk motion capture suit.

Film has always been an attractive medium, especially in our nation’s classrooms. It can be a powerful one too, stimulating discussion, debate, research and much more. As movie-making transitions from older analog technologies like chemical-based film to ever-advancing computer-driven techniques that meet the expectations of 21st century audiences, the learning opportunities around media literacy, art, science and tech grow accordingly. That’s what I want to explore in this column.

The Changing Face of Filmmaking

If you’ve followed recent developments, you may know that many movie makers have abandoned film for digital. One of the reasons is cost. (The documentary Side by Side, produced by Keanu Reeves, explores this transition even further.)

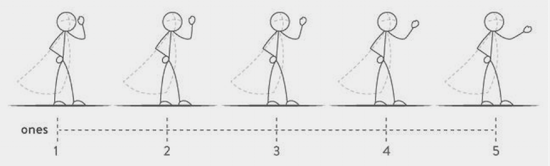

Ever since sound arrived in the late 1920s, movies have typically been shot at 24 frames-per-second (fps). (Silent films generally ran at slower, less standardized rates.) That means that 24 individual pictures move through the film camera and film projector to comprise one second. When we watch a movie projected from film, our eyes don’t see those 24 separate images; rather, we see fluid motion. The traditional explanation for this is a scientific principle called “persistence of vision.”

Persistence of vision “refers to the optical illusion whereby multiple discrete images blend into a single image in the human mind and [is] believed to be the explanation for motion perception in cinema and animated films.” (Source) One of the ways to demonstrate “persistence-of-vision” is by having students build a zoetrope or a praxinoscope. These early animation devices were the precursors of today’s movie projectors. (You can find instructions on how to construct both on the Internet.)

The zoetrope (above left) was invented in 1834 and uses slits through which one can view a strip of paper with several different images. The praxinoscope (above right) was invented in 1877 and improved on the zoetrope. It uses a series of mirrors that reflect the images of the animation strip, which is located inside the cylindrical device.

Both devices take advantage of persistence of vision because as they spin, and as we watch, our brain fills in the missing information between the images. The leap to early animated films (like Walt Disney’s early cartoons) is not hard to imagine after seeing these devices in action. Another popular way to help students grasp this science principle is via animated flip books. Students draw a series of images in which each successive image differs slightly from the previous one. When the pages are stacked and flipped with a thumb, a flip book also demonstrates persistence of vision.

Animated Movies

Think back to those 24 frames-per-second. Now imagine you’re an animator who has to draw 24 separate images for every second of screen time. If you’re making a stop-motion animated film, you must physically move your character 24 times to comprise one second. Yes, it’s time consuming. This explains why it can take years to make such a feature film.
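For teachers who want students to work out the numbers themselves, here is a minimal Python sketch of the arithmetic. The 24 fps figure comes from the paragraph above; the 90-minute running time and the function name `frames_needed` are assumptions chosen only for illustration.

```python
FPS = 24  # frames per second, as described in the text


def frames_needed(seconds, fps=FPS):
    """Return how many individual frames fill the given screen time."""
    return seconds * fps


# One second of screen time.
print(frames_needed(1))

# A feature film; the 90-minute running time is an assumed example value.
feature_seconds = 90 * 60
print(frames_needed(feature_seconds))
```

Students can change the running time or the frame rate and see how quickly the count of drawings (or puppet moves) grows.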

I recently visited the California Science Center to experience The Science Behind Pixar exhibition. The exhibit takes visitors through Pixar’s animation process and includes a number of hands-on manipulatives that help explain the science involved.

As you move through the exhibit, you begin to understand what modeling, rigging, surfaces, sets & cameras, lighting, animation, simulation, and rendering all mean and how they work together to create the final film we see on the screen.

Fortunately for educators, the Pixar website includes downloadable lesson plans you can use with your students. You can also learn more about the Pixar process at Khan Academy’s Pixar In A Box series.

Recommendations: Go behind-the-scenes of the making of the Oscar-nominated stop-motion feature “Kubo and the Two Strings” and watch as the animators do their magic. And read: Middle School Animation Projects

3D Movies and Science

Before the advent of present-day 3D films, movie fans had to wear red and blue glasses in order to see the depth that a 3D film generates. Back then, two cameras (separated by a small distance) would shoot the same scene, and when you wore those red/blue glasses your brain would combine those images and you would experience the depth that is the centerpiece of 3D films.

Today, when you put on a pair of 3D glasses, the lenses are not red and blue: they’re polarized. “One lens allows only vertically polarized light to pass through, while the other allows only horizontally polarized light. Two projections show slightly different images, using light polarized in one or the other direction…. In this way each eye sees a different image just like you would if you were viewing the scene in real life.” (Source)
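The physics behind the quote above can be sketched with Malus's law, which says that an ideal linear polarizer passes a fraction cos²θ of polarized light, where θ is the angle between the light's polarization and the lens's axis. The short Python sketch below assumes ideal polarizers and the vertical/horizontal arrangement described in the quote; the function name and the angle convention (0° horizontal, 90° vertical) are my own illustrative choices.

```python
import math

HORIZONTAL = 0   # degrees; angle convention assumed for this sketch
VERTICAL = 90


def transmitted_fraction(light_angle_deg, lens_angle_deg):
    """Malus's law: fraction of polarized light passing an ideal polarizer."""
    theta = math.radians(light_angle_deg - lens_angle_deg)
    return math.cos(theta) ** 2


# Image projected with vertically polarized light, seen through each lens.
through_vertical_lens = transmitted_fraction(VERTICAL, VERTICAL)
through_horizontal_lens = transmitted_fraction(VERTICAL, HORIZONTAL)

print(f"vertical lens passes {through_vertical_lens:.2f} of the image")
print(f"horizontal lens passes {through_horizontal_lens:.2f} of the image")
```

In this idealized model, each lens passes essentially all of one projection and blocks the other, which is how each eye ends up with its own image.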

This scene from Avatar shows what a 3D movie would look like without the help of glasses:

Motion Capture Becomes Popular

Motion capture, or mo-cap for short, has become one of the most popular technologies in filmmaking today. Motion capture is “the process or technique of recording patterns of movement digitally, especially the recording of an actor’s movements for the purpose of animating a digital character in a movie or video game.” (Source)

Most notably, actor Andy Serkis (below) has championed the technology and has been captured using mo-cap science in films such as “Rise of the Planet of the Apes” and “The Hobbit” just to name a few. Serkis calls motion capture “digital makeup.”

Motion capture gives life-like qualities to the partially digitized characters on the screen, and it all starts with the actor’s performance, captured by cameras and computers.

How is science involved? Here’s an explanation:

“Motion capture transfers the movement of an actor to a digital character. Systems that use tracking cameras (with or without markers) can be referred to as ‘optical,’ while systems that measure inertia or mechanical motion are ‘non-optical.’ Optical systems work by tracking position markers or features in 3D and assembling the data into an approximation of the actor’s motion. Active systems use markers that light up or blink distinctively, while passive systems use inert objects like white balls or just painted dots (the latter is often used for face capture).

Markerless systems use algorithms from match-moving software to track distinctive features, like an actor’s clothing or nose, instead of markers. Once captured, motion is then mapped onto a virtual ‘skeleton’ of the animated character. The result? Animated characters that move like real-life performers.” (Source)
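To give students a feel for how marker positions turn into a skeleton's pose, here is a minimal Python sketch that computes the bend at one joint from three 3D marker positions. It is an illustration only, not how any particular mo-cap system works; the marker coordinates and the function name `joint_angle` are made up for the example.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at marker b, formed by the segments b->a and b->c."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    len1 = math.sqrt(sum(x * x for x in v1))
    len2 = math.sqrt(sum(x * x for x in v2))
    # Clamp to [-1, 1] to guard against tiny floating-point overshoot.
    cos_angle = max(-1.0, min(1.0, dot / (len1 * len2)))
    return math.degrees(math.acos(cos_angle))


# Hypothetical marker positions (x, y, z) for one captured frame.
shoulder = (0.0, 1.0, 0.0)
elbow = (0.0, 0.0, 0.0)
wrist = (1.0, 0.0, 0.0)

print(f"elbow bend: {joint_angle(shoulder, elbow, wrist):.1f} degrees")
```

Repeating this calculation for every joint in every frame gives the stream of angles that could drive a virtual skeleton.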

The Rise of CGI

Another form of technology that’s also being used in the filmmaking process is computer-generated imagery (CGI).

A good example of how CGI is used in movies is the most recent Star Wars film, Rogue One, which had to recreate actor Peter Cushing for a key role. The actor played a major character in the 1977 film “A New Hope,” but passed away in 1994. The scriptwriters wrote him into the new film and then issued a challenge to the digital magic makers: bring Cushing’s character (Death Star commander Grand Moff Tarkin) back to life.

Using existing footage of the actor to create new scenes for him was extremely complicated, as explored in this video. The technique is not without controversy. Read this interview with the director (spoiler warning!). Here’s a quote:

People “like things more and more the more human they get. Yet when they get (very) close to human, but they are not human, it feels wrong. It feels like a zombie, or evil, or like there’s something going on that we just don’t like. There is a falseness to that, like a trick, and we hate it. That drop is (called the) ‘uncanny valley.'”

Students might consider the question: what are the pros and cons of bringing a dead actor “back to life”? Or they might share a time when they have experienced the uncanny valley effect while watching a movie (The Polar Express has some good examples).

Stunts and Other Special Effects

When you watch a movie and a car crashes and rolls over several times, or an actor jumps from a tall building, you’re probably not thinking about the science involved in those stunts. But Steve Wolf does think about the science.

As a stuntman and science educator, he teaches the science of stunts and special effects at schools across the country. He demonstrates how simple science lies behind many of the things we see on the movie screen. For more, see his company website and his book. You can learn about his Science in the Movies presentations here.

Also check out Science In Hollywood at the Science News for Kids website, which describes some specific work by scientists for the movies, including making realistic snow for the Disney movie Frozen (there is a great Disney short on snow simulation; the snow is practically a character).

Future Trends in Filmmaking Science

Filmmakers are continuing to push the boundaries. Directors James Cameron (“Avatar”) and Peter Jackson (“The Hobbit”) have already experimented with shooting at 48 fps. Most recently, Ang Lee shot a version of “Billy Lynn’s Long Halftime Walk” at 120 fps. The reasons they are moving away from 24 fps are something your students should explore.
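One starting point for that exploration is to compare how long each frame stays on screen at the three frame rates mentioned above. This small Python sketch simply divides one second by the frame rate; the function name is an illustrative choice.

```python
def frame_duration_ms(fps):
    """Return how long a single frame lasts, in milliseconds."""
    return 1000 / fps


# Frame rates mentioned in the text.
for fps in (24, 48, 120):
    print(f"{fps} fps: each frame lasts about {frame_duration_ms(fps):.1f} ms")
```

At 24 fps each frame lasts roughly 41.7 milliseconds, compared with about 20.8 at 48 fps and 8.3 at 120 fps. Students might discuss what shorter gaps between images could mean for fast action and camera movement.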

One movie company is experimenting with a 180-degree wrap-around screen while others have seats that move. Some theatres feature wind machines and devices that deliver scents to the audience. To the theatre owners, it’s all about an engaging experience that will drive audiences back into the theatres, because many of us would rather watch movies at home on our HDTV screens with surround-sound systems.

For educators, teaching about the process of film-making is an important part of media literacy, science and technology education. With today’s students already acting as “filmmakers” using phone cameras and e-tablets, they will be the ones helping to push today’s boundaries with new media and technologies.

Frank Baker recently created a web page STEM/STEAM & The Movies as a resource for educators who want to engage students with videos and readings about the way filmmakers use science, technology, engineering, art and math in their productions.